A buyer's guide to AI in health and care

Download full PDF (PDF, 8.3 MB)

This page provides a summary of "A buyer's guide to AI in health and care".

The exciting promise of artificial intelligence (AI) to transform areas of health and care is becoming a reality. Prospective AI vendors are proliferating, but it is not easy to tell their technologies apart, to assure yourself they’re safe and effective, or to decide whether a specific AI product is right for your organisation.

This guide sets out important questions you need to consider to make well-informed decisions about buying AI products.

The assessment template which accompanies the guide will help you answer these questions.

Ten questions you should consider

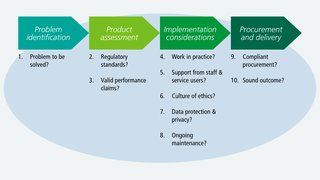

The guide takes you through ten questions categorised under:

- problem identification

- product assessment

- implementation considerations

- procurement and delivery.

If you need more guidance, feel free to contact the AI Lab at ailab@nhsx.nhs.uk - we’ll be able to signpost you to further support.

Overview

- What kind of AI products this guide applies to

- What problem are you trying to solve, and is AI the right solution?

- Does this product meet regulatory standards?

- Does this product perform in line with the manufacturer's claims?

- Will this product work in practice?

- Can you secure the support you need from staff and service users?

- Can you build and maintain a culture of ethical responsibility around this project?

- What data protection protocols do you need to safeguard privacy and comply with the law?

- Can you manage and maintain this product after you adopt it?

- Is your procurement process fair, transparent and competitive?

- Can you ensure a commercially and legally robust contractual outcome for your organisation, and the health and care sector?

What kind of AI products this guide applies to

This guide is concerned with procuring ‘off-the-shelf’ AI applications - i.e. products packaged by vendors ready for deployment. It does not focus on bespoke projects - i.e. research or build collaborations between health and care organisations and developers. That said, even products labelled as off-the-shelf will need some customising to meet an organisation’s specific needs.

What problem are you trying to solve, and is artificial intelligence the right solution?

You should start with the problem you’re trying to solve. Once you’ve identified the problem, can you explain why you are choosing AI? What additional ‘intelligence’ do you need and why is AI the solution? You should consider whether:

- the problem you’re trying to solve is associated with a large quantity of data which an AI model could learn from

- analysis of that data would be on a scale so large and repetitive that humans would struggle to carry it out effectively

- you could test the outputs of a model for accuracy against empirical evidence

- model outputs would lead to problem-solving in the real world

- the data in question is available - even if disguised or buried - and can be used ethically and safely

If you can’t satisfy these points, a simpler solution may be more appropriate.

Appropriate scale

You should consider the appropriate scale for addressing your challenge - i.e. organisational, system, regional or even national. Where organisations share specific challenges, collaboration can be valuable.

Working at system level, for example, may offer economies of scale through ‘doing things once’ and increased buying power. Key to this decision is the data the AI solution requires, and the scale at which the dataset becomes large enough to ensure a minimum level of viability. It may be that data needs to be pooled across several organisations to achieve this.

Making a credible business case

As with any investment, you’ll need to produce a business case to justify expenditure. But owing to the experimental nature of many AI projects, this is not straightforward.

Testing hypotheses on historical data, and then through a pilot project, may help you through some of the uncertainty.

Does this product meet regulatory standards?

CE marking

The Medicines and Healthcare products Regulatory Agency (MHRA) has overall responsibility for ensuring that regulatory standards for medical devices are met. A manufacturer must ensure that any medical device placed on the market or put into service carries the necessary CE marking. CE marking should be viewed as a minimum threshold, certifying that the device is safe, that it performs as intended and that its benefits outweigh its risks. From 1 January 2021, following the UK’s departure from the EU, there will be several changes to how medical devices are placed on the market. These include the introduction of UKCA (UK Conformity Assessed) marking as a new product marking route.

Intended use and risk classification

You should be clear about the ‘intended use’ of the product:

- what exactly it can be used for

- under what specific conditions it can be used

This should enable you, in turn, to be clear about the product’s risk classification. Medical devices can be classed as I, IIa, IIb or III in order of increasing risk. The requirements for obtaining a CE mark are similar across all classes, but their complexity and the effort involved increase as the risk class increases.

Products that are not medical devices

Where an AI product is not categorised as a medical device and is designed, for example, to improve efficiency rather than support specific clinical or care-related decision-making, regulations require manufacturers to develop their technology in line with ISO 82304 for healthcare software.

Does this product perform in line with the manufacturer’s claims?

As part of your procurement exercise, you’ll need to scrutinise the performance claims made by the manufacturer about the product.

Metrics should be aligned to intended use

The performance expected of a supervised learning model will vary in line with its intended use.

For example, in the case of classification models used for diagnosis, there’s an important trade-off between:

- sensitivity - the proportion of actual positive cases correctly identified

- specificity - the proportion of actual negative cases correctly identified

The trade-off depends on weighing up the healthcare consequences and health economic implications of missing a diagnosis versus over-diagnosing. This trade-off may vary at different stages of a care pathway.
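To make the two definitions concrete, here is a minimal Python sketch that counts true and false positives and negatives and derives sensitivity and specificity from them. The function name and the labels are hypothetical, chosen only for illustration; 1 marks an actual or predicted positive case and 0 a negative one.

```python
from typing import Sequence, Tuple


def sensitivity_specificity(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[float, float]:
    """Return (sensitivity, specificity) for binary labels, where 1 = positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # positives correctly flagged
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # positives missed
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # negatives correctly cleared
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # negatives wrongly flagged
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one actual positive and one actual negative case")
    sensitivity = tp / (tp + fn)  # proportion of actual positives correctly identified
    specificity = tn / (tn + fp)  # proportion of actual negatives correctly identified
    return sensitivity, specificity


# Hypothetical results for 10 cases: 4 actually positive, 6 actually negative.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
sens, spec = sensitivity_specificity(y_true, y_pred)
print(f"sensitivity={sens:.2f} specificity={spec:.2f}")  # sensitivity=0.75 specificity=0.83
```

Here one missed diagnosis (a false negative) costs sensitivity, while one over-diagnosis (a false positive) costs specificity; which of the two matters more is the clinical and economic judgement described above.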

Each metric sheds light on a different aspect of model performance, and all have limitations. Some metrics will be more appropriate than others for a given use case.

Performance of classification models

Classification models often provide a probability between 0 and 1 that a case is positive, as opposed to a binary result. Discrete classification into positive and negative is obtained by setting a threshold on the probability. If the value exceeds the threshold, the model classifies the case as positive. If the value does not exceed the threshold, the case is classified as negative.

You should pay attention to the chosen threshold, because performance metrics such as sensitivity and specificity change according to where it is set. Given this, the Area Under the Curve (AUC), usually meaning the area under the receiver operating characteristic (ROC) curve, is a helpful measure: it is a single metric that summarises model performance across all possible thresholds, rather than depending on the one chosen.
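The Python sketch below, using made-up scores, illustrates both points: moving the threshold changes sensitivity and specificity, while AUC does not depend on the threshold. It computes AUC through its standard rank interpretation, the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case (ties count as half), which is equivalent to the area under the ROC curve. The function names are hypothetical; in practice a library routine such as scikit-learn’s `roc_auc_score` would usually be used instead.

```python
from typing import List, Sequence, Tuple


def sens_spec_at(y_true: Sequence[int], scores: Sequence[float], threshold: float) -> Tuple[float, float]:
    """Sensitivity and specificity when scores above the threshold are classed as positive."""
    preds = [1 if s > threshold else 0 for s in scores]  # value exceeds threshold -> positive
    positives = sum(1 for t in y_true if t == 1)
    negatives = len(y_true) - positives
    tp = sum(1 for t, p in zip(y_true, preds) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, preds) if t == 0 and p == 0)
    return tp / positives, tn / negatives


def roc_auc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as the fraction of (positive, negative) pairs ranked correctly; ties count half."""
    pos: List[float] = [s for t, s in zip(y_true, scores) if t == 1]
    neg: List[float] = [s for t, s in zip(y_true, scores) if t == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical model outputs: probability that each case is positive.
y_true = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.6, 0.4, 0.5, 0.2, 0.1]
for threshold in (0.5, 0.3):
    sens, spec = sens_spec_at(y_true, scores, threshold)
    print(f"threshold={threshold}: sensitivity={sens:.2f} specificity={spec:.2f}")
print(f"AUC={roc_auc(y_true, scores):.3f}")  # the same whichever threshold is chosen
```

Lowering the threshold from 0.5 to 0.3 raises sensitivity from 2/3 to 1 at the cost of specificity, which falls from 1 to 2/3, while the AUC of 8/9 (about 0.889) is unchanged.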

Performance of regression models

Whilst classification models predict discrete quantities (i.e. classes), regression models predict continuous quantities - e.g. how many residential care beds will be needed for patients discharged from hospital next week.

Regression performance metrics differ in how strongly they are affected by outliers in the data. You should consider how much of an issue outliers are for your use case, and then prioritise metrics accordingly.
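As an illustration, the Python sketch below compares two common regression metrics, mean absolute error (MAE) and root mean squared error (RMSE), on hypothetical weekly bed-demand forecasts. Because RMSE squares each error before averaging, a single large miss moves it much more than it moves MAE. All figures are invented for the example.

```python
import math
from typing import Sequence


def mae(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean absolute error: every unit of error counts equally."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root mean squared error: large errors are penalised disproportionately."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


# Hypothetical weekly residential care beds needed: actual vs two sets of forecasts.
actual = [20, 22, 19, 21, 20]
steady = [21, 21, 20, 20, 21]       # every forecast is off by 1 bed
one_outlier = [21, 21, 20, 20, 31]  # same, except one week is off by 11 beds

print(mae(actual, steady), rmse(actual, steady))            # 1.0 1.0
print(mae(actual, one_outlier), rmse(actual, one_outlier))  # 3.0 5.0
```

A single 11-bed miss triples MAE (from 1 to 3) but multiplies RMSE by five (from 1 to 5). If occasional large misses are costly for your service, RMSE may deserve more weight; if they are tolerable, MAE may be the fairer summary.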

Validating the model’s performance

You should request details of validation tests that have been performed, and should expect to see a form of retrospective validation. This entails testing the model on previously collected data that the model has not seen. The purpose of this is to test whether the model can generalise - i.e. carry across - its predictive performance from the data it was trained on to new data.
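One common way to build such a test set, assumed here for illustration rather than prescribed by this guide, is a temporal split: the model is trained on cases up to a cutoff date and evaluated on cases collected after it. The Python sketch below also checks whether the same patient appears on both sides of the split, which could make the held-out data less ‘unseen’ than it looks. The `Case` record, its fields and the function names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Set, Tuple


@dataclass(frozen=True)
class Case:
    patient_id: str
    collected: date  # when the case was recorded


def temporal_split(cases: List[Case], cutoff: date) -> Tuple[List[Case], List[Case]]:
    """Cases before the cutoff are for training; cases on or after it are held out."""
    train = [c for c in cases if c.collected < cutoff]
    held_out = [c for c in cases if c.collected >= cutoff]
    return train, held_out


def patient_overlap(train: List[Case], held_out: List[Case]) -> Set[str]:
    """Patients who contribute cases to both the training and held-out sets."""
    return {c.patient_id for c in train} & {c.patient_id for c in held_out}


cases = [
    Case("A", date(2019, 3, 1)),
    Case("B", date(2019, 7, 9)),
    Case("C", date(2020, 2, 14)),
    Case("A", date(2020, 5, 2)),
]
train, held_out = temporal_split(cases, cutoff=date(2020, 1, 1))
print(len(train), len(held_out))          # 2 2
print(patient_overlap(train, held_out))   # {'A'}
```

Patient A appears in both sets here, which is the kind of detail you might ask a vendor about when reviewing their validation.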

Ensuring the model is safe

Investigating the model’s safety credentials is key - you need to be confident that the model is:

- robust

- fair

- explainable

- resilient against attempts to compromise an individual’s privacy

Assessing comparative performance

For any reported performance to be meaningful, it must be compared to the current state of play. For example, how does model performance compare with the product it will replace, or with the human decision-making it will augment?

Will this product work in practice?

The key issue is whether the performance claims made in theory will translate into practical benefits for your organisation.

Evidence standards for effectiveness

The NICE Evidence Standards Framework for Digital Health Technologies sets out the standards of evidence you should expect to see for a product’s effectiveness. These standards are stratified according to a product’s function and potential risk. In the case of medical devices, clinical evaluation reports (CERs) are the primary source of clinical evidence to support a manufacturer’s claims.

During procurement, you could ask the manufacturer about the product’s effectiveness in other health and care organisations, and for contact details of people in those organisations involved in the product’s implementation.

Issues affecting your project’s delivery

You should think about questions affecting project delivery such as:

- How ‘plug-in’ ready is the product, and will your organisation need to make significant changes to realise the promised benefits?

- How smoothly does the product operate for users, and how well does it meet user needs?

- How will your existing systems integrate with the new technology to ensure clear and reliable workflows?

- What are the product’s data requirements, and does your organisation have the necessary data - labelled, formatted and stored in the right way?

- What data storage and computing power does the product need, and how will you ensure this is in place?

- How will you monitor whether your product is achieving in practice the benefits anticipated in theory?

Can you secure the support you need from staff and service users?

The breadth and depth of multidisciplinary backing needed for AI procurement is greater than for the procurement of a more traditional technical product.

Involving staff fully

You should involve staff who will be end-users of the AI solution in every stage of the procurement process, to ensure that the product will meet their needs.

Changing the way people work

At the implementation stage, influencing and supporting people to change the way they do their work is difficult. Make sure you factor this into your plans and that your prospective vendor will supply any induction or training that you need.

Communicating clearly and persuasively

Can you tell a persuasive story about the expected improvement in health and care? This will be important for getting buy-in from patients and service users.

You should design a ‘no surprises’ communication approach about:

- how the AI product is being used

- how patients’ and service users’ data is being processed

- where relevant, how an AI model is supporting decisions which impact on patients and service users

Can you build and maintain a culture of ethical responsibility around this project?

Ethics, fairness and transparency are vital for AI in health and care. Building a culture of ethical responsibility is key to successful implementation: health and care staff are less likely to adopt a technology that they don’t feel is in keeping with their professional ethical standards.

You should ensure your AI project is:

- ethically permissible

- fair and non-discriminatory

- worthy of public trust

- justifiable

To help you make an informed judgement, you should carry out a stakeholder impact assessment before making any procurement decisions.

What data protection protocols do you need to safeguard privacy and comply with the law?

Data protection must be embedded into every aspect of your project. You’ll need to create a data flow map that identifies the data assets and data flows - i.e. the exchanges of data - related to your AI project.

Data governance

Where the data flow map identifies data being passed to and processed by a data Processor (i.e. the vendor) on behalf of a data Controller (i.e. your organisation), you’ll need a legally binding written data processing contract - otherwise known as an information sharing agreement.

Further information governance measures depend on the purpose of the data processing and whether the data being processed could identify individuals. If individuals can be identified, this is sensitive personal data and you must complete a Data Protection Impact Assessment.
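A data flow map can be kept as a simple structured list. The hypothetical Python sketch below records each flow and flags the two measures named above: a written data processing contract where data goes to a Processor acting on your organisation’s behalf, and a Data Protection Impact Assessment where the data could identify individuals. The field names and example flows are invented, and the sketch encodes only those two rules, not a full information governance assessment.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class DataFlow:
    name: str
    source: str
    destination: str
    to_processor: bool   # passed to a Processor (e.g. the vendor) acting for your organisation
    identifiable: bool   # could the data identify individuals?


def governance_actions(flows: List[DataFlow]) -> Dict[str, List[str]]:
    """Map each flow to the measures that the two rules above call for."""
    actions: Dict[str, List[str]] = {}
    for flow in flows:
        needed: List[str] = []
        if flow.to_processor:
            needed.append("written data processing contract")
        if flow.identifiable:
            needed.append("Data Protection Impact Assessment")
        actions[flow.name] = needed
    return actions


# Hypothetical flows for an imaging AI product hosted by the vendor.
flow_map = [
    DataFlow("scan upload", "radiology archive", "vendor cloud", to_processor=True, identifiable=True),
    DataFlow("usage report", "vendor cloud", "analytics team", to_processor=False, identifiable=False),
]
for name, needed in governance_actions(flow_map).items():
    print(name, "->", needed or ["nothing flagged by these two rules"])
```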

Rights of individuals over the use of their personal data

Where identifiable data is being processed, individuals have the right to:

- be informed about how their personal data is collected and used

- give consent to the use of their data

- access their data

You should ensure that use of data for the AI project is covered by your organisation’s data privacy notice. You’ll also need to document what’s in place to mitigate the risk of a patient or service user being re-identified - in an unauthorised way - from the data held about them.

Can you manage and maintain this product after you adopt it?

Support from the vendor

During procurement, you should investigate what support the vendor is offering for ongoing management and maintenance of the product and ask about their post-market surveillance plans - i.e. plans to monitor the safety of their device after it has been released on the market. This includes asking about:

- features of their managed service

- their approach to product and data pipeline updates

- their plan for mitigating product failure

- their plan for addressing drift in the model’s performance beyond a margin that is acceptable to you

You should be clear about your organisation’s responsibilities and capabilities in relation to operation and maintenance.
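To make ‘a margin that is acceptable to you’ concrete, the Python sketch below flags drift when the rolling average of a monitored metric falls below an agreed baseline minus a tolerance. The metric (weekly sensitivity), the baseline of 0.90, the margin of 0.05 and the four-week window are all hypothetical values; the right metric and limits depend on your use case and your agreement with the vendor.

```python
from collections import deque
from typing import Deque, List, Sequence


def drift_alerts(values: Sequence[float], baseline: float, margin: float, window: int = 4) -> List[int]:
    """Return the periods where the rolling mean of `values` drops below baseline - margin."""
    floor = baseline - margin
    recent: Deque[float] = deque(maxlen=window)  # keeps only the last `window` values
    alerts: List[int] = []
    for period, value in enumerate(values):
        recent.append(value)
        if len(recent) == window and sum(recent) / window < floor:
            alerts.append(period)
    return alerts


# Hypothetical weekly sensitivity, measured once outcomes are confirmed.
weekly_sensitivity = [0.91, 0.90, 0.89, 0.90, 0.84, 0.80, 0.78, 0.77]
print(drift_alerts(weekly_sensitivity, baseline=0.90, margin=0.05))  # [6, 7]
```

Averaging over a window means a single poor week does not trigger an alert on its own; the trade-off is that genuine drift is detected a little later.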

Sending back data to the vendor

You should clarify whether the vendor expects your organisation to send back data to support the vendor’s iteration of the model or development of other products. Depending on the product, this could be categorised as a clinical investigation and require separate approval. You will also need to address this in your information governance arrangements.

Decommissioning

Decommissioning is a key final stage of the management and maintenance cycle. You should ensure that suitable plans are in place at the outset.

Is your procurement process fair, transparent and competitive?

As with any technology, AI products need to be purchased on the basis of recognised public procurement principles. Early engagement with the market may identify new potential vendors, level the playing field and help vendors understand what buyers need. At the same time, you should be clear about and document your justification for talking to and inviting specific vendors to bid.

Can you ensure a commercially and legally robust contract for your organisation, and the health and care sector?

This guide does not provide a comprehensive treatment of commercial contracting, but these are key questions to consider:

- Are you clear about exactly what you are procuring?

- Is it a lifetime product?

- Is it a licence?

- What is the accompanying support package?

You should set out a service level agreement as part of your contracting process. You should also ensure that the financial arrangements you’re establishing are sustainable in the long term.

Open contracting

In principle, your contracting should be as open as possible. Whilst confidentiality clauses are often invoked to prevent disclosure of commercially sensitive information, doing so can be detrimental to public trust.

Recognising the value of data

Your contracts should recognise and safeguard the value of the data that you are sharing, and the resources which are generated as a result.

Where your organisation sends back data to the vendor - whether for the purpose of auditing the product, re-training the model, or potentially developing a new product - you may be contributing to the creation of intellectual property. You should take advice early to address this appropriately. NHSX’s Centre for Improving Data Collaboration can offer tailored guidance - please contact the Centre at improvingdatacollaboration@nhsx.nhs.uk.

Liability should anything go wrong

Liability issues should not be a barrier to adoption of effective technology. However, it’s important to be clear on who has responsibility should anything go wrong. Product liability and indemnity are therefore important issues to address at the contracting stage.

In the case of data protection, your organisation - as a data Controller - is primarily responsible for its own compliance but also for ensuring the compliance of its data Processors. A Controller is expected to have measures in place to reduce the likelihood of a data breach, and will be held accountable if it has not done so.
