Creating an international approach to AI for healthcare

Download full PDF (PDF, 3.9 MB)

The use of artificial intelligence (AI) in health systems has accelerated globally, with different applications being tested and put into practice across diverse areas of health system design and delivery. The COVID-19 pandemic has led to additional deployment of AI-based, data-driven technologies (referred to as AI-driven technologies hereafter) in health at both national and local levels. However, AI-driven technology development in healthcare is outpacing the creation of supporting policy frameworks and regulation.

The NHS AI Lab was commissioned by the Global Digital Health Partnership (GDHP) to understand the current use of AI-driven technologies across member countries, and the supporting policies and regulations in place. By doing so, the NHS AI Lab was able to identify gaps and opportunities for international governance and coherence, with the aim of ensuring that AI-driven technologies are properly governed, regulated and used for maximal benefit in health systems.

The resulting white paper, AI for healthcare: Creating an international approach together, is a step towards providing much-needed policy guidance to the international health community. Building on rapid literature and policy reviews, interviews with GDHP member countries, and a focus group with experts in digital health, the white paper includes a set of policy recommendations on how best to support and facilitate the use of AI-driven technologies within health systems. The policy recommendations are presented at a high level, so as to be applicable regardless of a country's digital health maturity level.

The authors hope the international health community can use the policy recommendations as a base when creating national and regional approaches to developing and using AI-driven technologies in their health systems.

Read our full white paper: AI for healthcare: Creating an international approach together (PDF 3.9MB).

Taking an AI life cycle approach

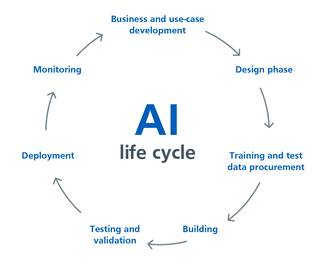

Any policy recommendations or frameworks for the use of AI-driven technologies in healthcare need to cover the whole AI life cycle. The illustration shows the AI life cycle, which includes:

- business and use case development

- designing the algorithm and product

- procuring training and test data

- building the algorithm and/or product

- testing and validating the algorithm

- deploying the AI

- monitoring its performance

As described, the development of AI-driven technologies in this life cycle is an iterative process involving scoping, designing and building, then deploying the AI-driven technology with continuous monitoring, followed by improvement as and when the need arises. In order to implement AI-driven technologies successfully within a health system, countries need to consider and support each step in the AI life cycle.

Spanning the AI life cycle, this white paper outlines four strategic categories and associated policy recommendations to help policymakers implement AI-driven technologies in their health systems:

1. Leadership and oversight

The successful implementation of AI-driven technologies into health systems requires leadership that can deliver the required digital maturity of the health system, regulatory environment and skilled workforce to make the most of AI products. Leaders must also be able to communicate clear use cases for integration of AI into health settings.

The variation in the GDHP countries' health systems, as well as their digital maturity, led to a broad range of oversight mechanisms for the use of AI, and indeed technology as a whole, in healthcare settings. While all countries stated that they had an organisation or body responsible for digital health, there was little consistency in how these bodies were organised, or in their remit or statutory powers.

Yet there was consensus amongst GDHP members that health system leaders need to set a vision for the use of AI in healthcare at a national level, with room for local interpretation and implementation. It was stressed that high-level strategic vision should be based on clearly identified areas within a country’s health system where AI-driven technologies could potentially bring the greatest benefit to population health.

2. Ecosystem

There is a continued need to address the entire ecosystem of AI research, development and implementation rather than just one aspect of the AI life cycle in order to create a facilitative environment for AI-driven technologies to thrive and be scaled. This ‘begins’ with data curation and aggregation of representative datasets, as well as timely and appropriate access to them. The need for improved data quality and access is well recognised in the UK with the NHSX Centre for Improving Data Collaboration having been set up to create a facilitative environment for data sharing.

Many countries highlighted that they needed support to progress AI research beyond early-stage algorithmic training and into clinical environments. Support includes facilitative policies, funding streams, and cross-sector collaboration. GDHP countries also described a desire for technology, research and clinical expertise to be co-located to catalyse progression, as well as to ensure that clinical considerations are integrated throughout the AI development process. In the UK, the innovation hubs facilitated by Health Data Research UK are examples of such multidisciplinary working at a local level, supported by central policy and infrastructure.

3. Standards and regulation

Ensuring the safety and quality of AI-driven technologies requires the development of rigorous standards that facilitate comprehensive regulation and are coherent across the international landscape.

A lack of standardisation can result in a range of issues, including problems with quality assurance, ethical concerns, and patient safety concerns. For example, there is no guarantee that an AI model trained and designed in one country or setting will achieve the same levels of efficacy in another. AI developers may also need to create different products for different national contexts, potentially hampering market competition, and could choose where to develop their product based on which setting sets the lowest minimum expectations.

Implementation of these standards requires adequate regulatory oversight, with appropriate processes and people in place to monitor adherence both before and after deployment. All of the countries interviewed described encountering complex issues when trying to regulate AI-driven technologies for healthcare. Issues ranged from determining the scope of AI regulation, to confusion over where responsibility for each stage should lie, to ensuring that the right skills were available amongst the regulatory workforce. Not only would international policy making in this area reduce the burden on national governments, but it would also bring consistency that would allow countries to use each other’s regulatory approval to augment their own processes.

4. Engagement

There is a clear need for meaningful engagement with patients, the public and healthcare professionals in decision making around AI-driven technologies in healthcare.

Overwhelmingly, GDHP countries stressed the need for transparency: the evidence and rationale for why one AI-driven technology might be approved and another not should be publicly shared. A number of participants mentioned that they had struggled to achieve success with engagement activities conducted to date, and there was a feeling that meaningful public engagement was often limited by the heterogeneity of the population, with a risk of the most vocal and/or digitally literate groups monopolising the conversation.

While these concerns are valid, deliberative engagement events such as Citizens Juries, which offer a supportive environment for participants to discuss their views, can provide an opportunity to gather insights around specific case studies. Citizens Juries have already been used in the UK to capture public opinion on the use of health data.

The research also confirmed that investment in educating healthcare professionals, policymakers and the general public on what AI is and how it can augment healthcare delivery is essential for engendering trust and assuring successful adoption of AI-driven technologies.

Summary of recommendations

Leadership and oversight

Define the need: Countries need to take a “needs-based” approach to setting the vision and direction for the use of AI-driven technologies within their health system. A country's use of AI should be based on the problems and opportunities in the health system where AI-driven technologies could have the most impact to improve people’s health outcomes.

End-to-end oversight: Oversight of AI-driven technologies within a health system needs to cover the whole AI life cycle, alongside other supporting activities such as research, funding, and workforce development.

Provide regulatory clarity: Regulatory clarity is required both within and between countries to enable AI developers to understand and manage the risk of introducing AI-driven technologies into a health system.

Ecosystem

Aligning innovation with healthcare need: Setting of research priorities for AI, and associated allocation of funding, should be based on the needs of patients and the health system.

Access to quality data: Countries should work to aggregate and link data from across their health and social care system, to create high quality repositories for analysis by accredited researchers, with provision of secure analytics environments and/or with appropriate mechanisms for data extraction in place.

Deployment pipeline: The translation of AI research into digital healthcare applications should be supported by a robust deployment pipeline.

Working across sectors: There should be exploration of public-private partnerships to address relevant skills and funding gaps that are preventing and stalling AI-driven technology development, while safeguarding the interests of patients and the health system.

Standards and regulation

Clear and comprehensive AI standards: There is a need for national standards to set minimum evidence and expectations for the entire AI life cycle, which should, where possible, be co-created with relevant disciplines and industries.

International standards for benchmarking: International standards should be developed to promote collaboration, with guidance for adaptation to national contexts and accounting for socioeconomic and cultural nuances.

Robust regulation of AI throughout the life cycle: Countries need to create robust regulatory processes that have a clearly defined scope and intention, recognising the distinct nature of AI-driven technologies within regulatory models and delineating responsibility for each stage of the AI life cycle. These processes need to be transparent, proactive and flexible.

Engagement

Design with users: The patient, healthcare professionals and relevant stakeholders need to be involved in the design of AI-driven technologies from the start to ensure the resultant product or service meets clinical, user and professional needs and complements existing workflows and experiences.

Demonstrable benefit: Countries should focus on engaging and generating trust with the public, healthcare professionals, industry, and other stakeholders by delivering AI-driven technologies that concentrate on meeting needs within the health system. Doing so moves the conversation about the public acceptability of AI away from the theoretical to one of showing the benefit and value AI-driven technologies bring to the health system.

Invest in education: Countries need to invest in wider public, professional and industry education on what is classed as AI, how AI-driven technologies are currently used in the health system and other industries, and what the benefit is to the end user, especially compared to conventional methods.

Want to learn more?

You can read our full white paper, AI for healthcare: Creating an international approach together (PDF, 3.9MB).

The NHS AI Lab thanks the GDHP for supporting us to conduct this research during what is a difficult time for the international health community. We hope the insight and recommendations shared in this paper are useful for all countries for ensuring the safe adoption of effective AI-driven technologies that meet the needs of patients, the public, healthcare professionals and the wider health system.

Within the UK, we look forward to working with our partners to embed these findings into our thinking and programmes. The NHS AI Lab is committed to continuing to learn from our international colleagues and to bringing better and safer access to AI-driven technology for our NHS and its patients.
